(Lucas Kovar and Michael Gleicher; SIGGRAPH '04)

Large motion data sets often contain many variants of the same kind of motion, but without appropriate tools it is difficult to fully exploit this fact. This paper provides automated methods for identifying logically similar motions in a data set and using them to build a continuous and intuitively parameterized space of motions. To find logically similar motions that are numerically dissimilar, our search method employs a novel distance metric to find "close" motions and then uses them as intermediaries to find more distant motions. Search queries are answered at interactive speeds through a precomputation that compactly represents all possibly similar motion segments. Once a set of related motions has been extracted, we automatically register them and apply blending techniques to create a continuous space of motions. Given a function that defines relevant motion parameters, we present a method for extracting motions from this space that accurately possess new parameters requested by the user. Our algorithm extends previous work by explicitly constraining blend weights to reasonable values and having a run-time cost that is nearly independent of the number of example motions. We present experimental results on a test data set of 37,000 frames, or about ten minutes of motion sampled at 60 Hz.
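
The idea of using close matches as intermediaries to reach more distant motions can be illustrated with a small sketch. Everything here is an assumption made for illustration: segments are reduced to plain feature tuples, `distance` is ordinary Euclidean distance standing in for the paper's metric, and `expand_matches` is a hypothetical helper, not the authors' precomputed search structure.

```python
import math
from collections import deque


def distance(a, b):
    """Euclidean distance between feature tuples (a placeholder metric)."""
    return math.dist(a, b)


def expand_matches(query, segments, threshold):
    """Return indices of segments reachable from `query` via chains of close matches."""
    found = set()
    frontier = deque([query])
    while frontier:
        current = frontier.popleft()
        for i, seg in enumerate(segments):
            if i not in found and distance(current, seg) <= threshold:
                found.add(i)
                # A newly found match becomes a query for the next round.
                frontier.append(seg)
    return sorted(found)


# Toy one-dimensional "segments": neighbors are 0.8 apart, the last is an outlier.
segments = [(0.0,), (0.8,), (1.6,), (2.4,), (10.0,)]
matches = expand_matches((0.0,), segments, threshold=1.0)
```

In this toy data the segment at 2.4 is farther than the threshold from the query, yet it is still reached through the chain of intermediate matches, while the outlier at 10.0 is not.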

(Hyun Joon Shin, Lucas Kovar, and Michael Gleicher; Pacific Graphics '03)

Most popular motion editing methods do not take physical principles into account, potentially producing physically implausible motions. We present a method for "touching up" motions to improve physical plausibility. Specifically, we first divide the motion into ground and flight stages and then enforce zero moment point constraints in the former and linear/angular momentum constraints in the latter. Unlike previous methods, we do not employ nonlinear optimization; rather, we use efficient closed form algorithms that allow a user to build a set of hierarchical displacement maps which refine user-specified degrees of freedom at different scales.

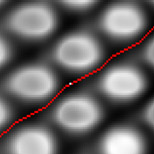

(Lucas Kovar and Michael Gleicher; SCA '03)

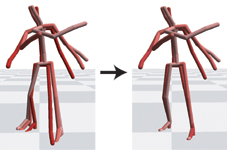

Many motion editing algorithms, including transitioning and multitarget interpolation, can be represented as instances of a more general operation called motion blending. We introduce a novel data structure called a registration curve that expands the class of motions that can be successfully blended without manual input. Registration curves achieve this by automatically determining relationships involving the timing, local coordinate frame, and constraints of the input motions. We show how registration curves improve upon existing automatic blending methods and demonstrate their use in common blending operations.
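
To give a feel for the timing part of this problem, the sketch below aligns two sequences with generic dynamic time warping. This is a stand-in technique chosen for illustration, not a description of how registration curves are built, and it ignores coordinate frames and constraints entirely. The function name `time_alignment` and the one-number-per-frame features are assumptions.

```python
def time_alignment(a, b):
    """Dynamic time warping over two 1D feature sequences.

    Returns the minimum-cost list of (i, j) frame correspondences
    from (0, 0) to (len(a) - 1, len(b) - 1).
    """
    n, m = len(a), len(b)
    inf = float("inf")
    cost = [[inf] * m for _ in range(n)]
    cost[0][0] = abs(a[0] - b[0])
    for i in range(n):
        for j in range(m):
            if i == 0 and j == 0:
                continue
            best = min(
                cost[i - 1][j] if i > 0 else inf,
                cost[i][j - 1] if j > 0 else inf,
                cost[i - 1][j - 1] if i > 0 and j > 0 else inf,
            )
            cost[i][j] = abs(a[i] - b[j]) + best

    # Backtrack from the last cell to recover the correspondence path.
    i, j = n - 1, m - 1
    path = [(i, j)]
    while (i, j) != (0, 0):
        candidates = []
        if i > 0 and j > 0:
            candidates.append((cost[i - 1][j - 1], i - 1, j - 1))
        if i > 0:
            candidates.append((cost[i - 1][j], i - 1, j))
        if j > 0:
            candidates.append((cost[i][j - 1], i, j - 1))
        _, i, j = min(candidates)
        path.append((i, j))
    path.reverse()
    return path


# The second sequence holds its first value one frame longer.
path = time_alignment([0, 1, 2], [0, 0, 1, 2])
```

Once frames are put in correspondence like this, a blend can average matching frames instead of frames that merely share a timestamp.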

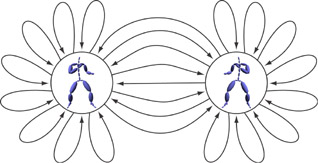

(Michael Gleicher, Hyun Joon Shin, Lucas Kovar, and Andrew Jepsen; I3D '03)

Many virtual environments and games must be populated with synthetic characters to create the desired experience. These characters must move with sufficient realism, so as not to destroy the visual quality of the experience, yet be responsive, controllable, and efficient to simulate. In this paper we present an approach to character motion called Snap-Together Motion that addresses the unique demands of virtual environments. Snap-Together Motion preprocesses a corpus of motion capture examples into a set of short clips that can be concatenated to make continuous streams of motion. The result is a simple graph structure that facilitates efficient planning of character motions. A user-guided process selects "common" character poses and the system automatically synthesizes multi-way transitions that connect through these poses. In this manner well-connected graphs can be constructed to suit a particular application, allowing for practical interactive control without the effort of manually specifying all transitions.

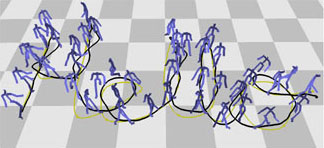

(Lucas Kovar, Michael Gleicher, and Fred Pighin; SIGGRAPH '02)

This work presents a novel method for creating realistic, controllable motion. Given a corpus of motion capture data, we automatically construct a directed graph called a motion graph that encapsulates connections among the motions in a database. The motion graph consists both of pieces of original motion and automatically generated transitions. Motion can be generated simply by building walks on the graph. We present a general framework for extracting particular graph walks that meet a user's specifications. We then show how this framework can be applied to the specific problem of generating different styles of locomotion along arbitrary paths.
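
As a rough illustration of generating motion by building walks on a graph, the sketch below stores a toy directed graph whose edges are named clips and strings clips together with a random walk. The node names, clip names, and the `random_walk` helper are all hypothetical, and a random walk is only the simplest possible case: it does not attempt the constrained walk extraction the abstract describes.

```python
import random

# Hypothetical toy motion graph: each node maps to (clip_name, next_node) edges.
motion_graph = {
    "stand": [("stand_to_walk", "walk")],
    "walk": [
        ("walk_cycle", "walk"),
        ("walk_to_run", "run"),
        ("walk_to_stand", "stand"),
    ],
    "run": [("run_cycle", "run"), ("run_to_walk", "walk")],
}


def random_walk(graph, start, num_clips, rng):
    """Follow `num_clips` randomly chosen edges and return the clip names in order."""
    node = start
    clips = []
    for _ in range(num_clips):
        clip, node = rng.choice(graph[node])
        clips.append(clip)
    return clips


# Seeded generator so the walk is repeatable.
clips = random_walk(motion_graph, "stand", 5, random.Random(0))
```

Because every edge ends at a node where other clips begin, any walk yields a sequence of clips that can be played back to back.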

(Lucas Kovar, John Schreiner, and Michael Gleicher; SCA '02)

While motion capture is commonplace in character animation, often the raw motion data itself is not used. Rather, it is first fit onto a skeleton and then edited to satisfy the particular demands of the animation. This process can introduce artifacts into the motion. One particularly distracting artifact is when the character's feet move when they ought to remain planted, a condition known as footskate. In this work we present a simple, efficient algorithm for removing footskate. Our algorithm exactly satisfies footplant constraints without introducing disagreeable artifacts.

(Lucas Kovar and Michael Gleicher; UIST '02)

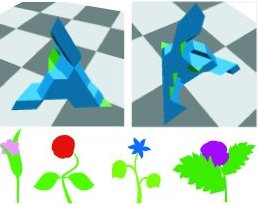

This work presents a method for helping artists make artwork more accessible to casual users. We focus on the specific case of drawings, showing how a small number of drawings can be transformed into a richer object containing an entire family of similar drawings. This object is represented as a simplicial complex approximating a set of valid interpolations in configuration space. The artist does not interact directly with the simplicial complex. Instead, she guides its construction by answering a specially chosen set of yes/no questions. By combining the topological generality of a simplicial complex with direct human guidance, we are able to represent very general constraints on membership in a family. The constructed simplicial complex supports a variety of algorithms useful to an end user, including random sampling of the space of drawings, constrained interpolation between drawings, projection of another drawing into the family, and interactive exploration of the family.
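
One way to picture random sampling from such a family is to pick a simplex and blend its vertices with random barycentric weights. The sketch below does that under stated assumptions: each drawing is reduced to a short coordinate tuple, `sample_simplex` and `sample_complex` are hypothetical names, and simplices are chosen with equal probability rather than weighted by volume, so the samples are not uniform over the whole complex.

```python
import random


def sample_simplex(vertices, rng):
    """Draw a point uniformly from the simplex spanned by `vertices`.

    Normalized exponential variates give uniformly distributed
    barycentric weights (non-negative, summing to one).
    """
    raw = [rng.expovariate(1.0) for _ in vertices]
    total = sum(raw)
    weights = [r / total for r in raw]
    dim = len(vertices[0])
    point = tuple(
        sum(w * v[k] for w, v in zip(weights, vertices)) for k in range(dim)
    )
    return weights, point


def sample_complex(points, simplices, rng):
    """Pick one simplex at random, then sample a point inside it."""
    simplex = rng.choice(simplices)
    return sample_simplex([points[i] for i in simplex], rng)


# Toy configuration space: four example "drawings" covering the unit square,
# split into two triangles that share an edge.
points = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
simplices = [(0, 1, 2), (1, 2, 3)]
weights, drawing = sample_complex(points, simplices, random.Random(1))
```

Every sample is a convex combination of example drawings that share a simplex, so it stays inside the region the complex marks as valid.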
