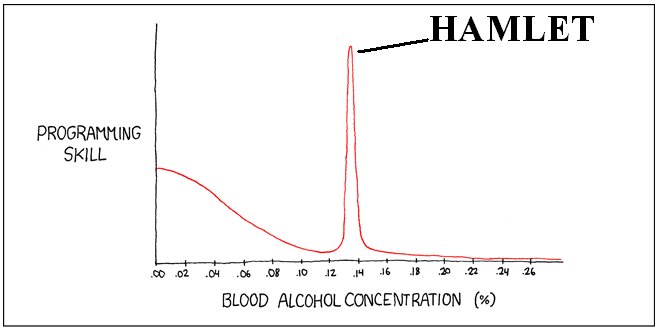

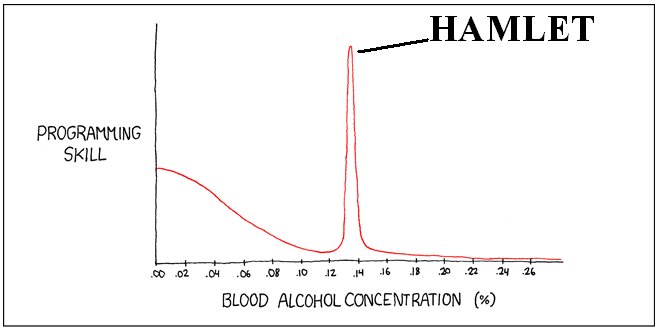

(Adapted from xkcd.com)

HAMLET Human, Animal, and Machine Learning: Experiment and Theory

Meetings: Fridays 3:45 p.m. - 5 p.m., the Berkowitz room (338 Psychology)

Schedule: Fall 2009

A student in your class keeps nodding and can recite everything you said. How do you know whether he has truly learned the material or is simply overfitting your lecture? We offer a measure that combines computational learning theory and cognitive psychology to gauge human generalization abilities.

Information theory studies the "philosophy" of efficient data storage and reliable data communication. Its central objective is to identify and characterize provably good architectures for such systems. In this talk I'll try to give a sense of the foundational questions posed in information theory, say a bit about the tools and techniques involved, and convey the type of answers that result. Many of the questions involved -- what do we mean by "information", what is an "efficient" or "reliable" data storage or communication algorithm, is feedback helpful (not always) and how -- resonate with familiar and analogous processes carried out by biological systems.

Imagine that an alien landed on Earth and heard a multitude of strange sounds coming from the planet's creatures. What would it make of this? Could it distinguish species based on their sounds? Perhaps all of Earth's animals would sound alike? Could it learn these auditory signals are forms of communication? How would it decipher them? What commonalities would it find among the varied inhabitants of this world? This talk examines how this kind of scenario can be addressed. We use a combination of machine learning, formal language theory, and signal processing to analyze vocalizations from a variety of animals. In doing so, we find remarkable commonalities across species drawn from different phylogenetic orders. This approach is a radical departure from traditional studies in animal vocalization, which rely on ad hoc analyses through human observation and manual signal manipulation. By combining linguistics, computer science, and information theory, we are able to gain new insights into universals between seemingly different species. This talk focuses on gibbons (Hylobates lar) and humans, and we additionally examine brown bats (Eptesicus fuscus) and several bird and cetacean species. Specifically, we find a surprising degree of agreement with modern theories of human linguistics and cognition. That language and cognitive science have focused almost exclusively on Homo sapiens has inhibited examining possible universals in vocal communication and cognition. For example, phonology -- the notion that vocalizations are constructed from a finite repertoire of discrete units -- appears universal among the species we have studied. We also have found evidence for the existence of morphology -- distinct patterns of phonemes representing abstract concepts; that is, words are not uncommon in animal communication. We then examine how these complex units are strung together to form utterances that have semantic meaning. Finally, we close with a set of well-defined open questions. (This is joint work with Eric Raimy, Angela Dassow, and Esther Clarke.)

Humanlike robots might someday provide social and informational services such as storytelling, educational assistance, and companionship through complex, adaptive real-world interactions. For these systems to effectively offer such services, designers need to have a better understanding of people's expectations of these systems and how these expectations might be met by carefully designing appropriate social behaviors that support humanlike features. In this talk, I will present an interdisciplinary process for designing social behavior for humanlike robots that draws on knowledge on human social behavior from the social sciences and findings from laboratory observations of social interactions. I will present a series of empirical studies that demonstrate how this process might be used to design social gaze behaviors for humanlike robots and how participants show social and cognitive improvements (particularly, better recall of information, more conversational participation, and stronger rapport and attribution of mental states) led by theoretically based manipulations in the designed gaze behavior. I will also discuss some of the bottlenecks in developing robots that can offer complex social interactions and deliver value through real-world applications.

One challenge in artificial intelligence is to enable natural interactions between people and computers via multiple modalities. It is often desirable to convert information between modalities. One example is the conversion between text and speech using speech synthesis and speech recognition. However, such conversion is rare between other modalities. In particular, relatively little research has considered the transformation from general text to pictorial representations. This talk will discuss our efforts to develop general-purpose Text-to-Picture (TTP) synthesis algorithms that automatically generate pictures that try to convey the main meaning of one or more natural language sentences. TTP synthesis has many practical applications: a communication system equipped with this technology could turn signs and operating instructions into easy-to-understand graphical forms; combined with optical character recognition, a personal assistant device could create such visual translations on-the-fly without the help of a caretaker. TTP synthesis may also facilitate literacy development and rapid browsing of documents through pictorial summaries. Unlike prior systems that require hand-crafted narrative descriptions of a scene, our algorithms generate static or animated pictures that represent important objects, spatial relations, and actions for general text. Key components include extracting important information from text, generating corresponding images for each piece of information, composing the images into a coherent picture, and evaluation. Our approach uses statistical machine learning and draws ideas from automatic machine translation, text summarization, text-to-speech synthesis, computer vision, and graphics. In addition to introducing the TTP problem and describing two systems we have developed, this talk will discuss an educational application and related collaborative research with Psychology professor Dr. Art Glenberg. (This is joint work with Jerry Zhu, Chuck Dyer, and Jake Rosin in Computer Sciences.)

Over the course of the last century, many arguments (Dutch Books, representation theorems, etc.) have been offered for Bayesianism, the position that agents should have degrees of confidence satisfying the probability axioms. I'll suggest that one of the best reasons for Bayesianism (and one of the reasons it has become so popular) is its adeptness at modeling the subtle evidential relations involved in inductive reasoning. I'll lay out some general properties an evidential support relation should display (and some properties that may seem intuitive but that it shouldn't), and explain how Bayesianism does an excellent job of fitting the bill.

Xu and Tenenbaum recently put forward a new theory about how people learn the meanings of words. The theory suggests that word-learning can be likened to an optimal inference procedure in which the learner combines prior knowledge with new evidence to draw conclusions about the concept to which a new word refers. After encountering a new word paired with a recognizable object or set of objects, the learner computes, for each of a vast repertoire of stored concepts, the likelihood of observing the learning examples under the hypothesis that these have been sampled at random from the extension of the concept. These probabilities are combined with prior beliefs about the likelihood of each concept being a potential target for naming. Stored concepts are construed as discrete nodes residing within a hierarchical graph structure that is used to set the priors: the "basic level" concepts that reside near the middle of the hierarchy are given a higher prior probability than are more specific or more general concepts; and concepts that reside within the hierarchy are assumed to have a higher prior than concepts that violate it. Given the priors and the probabilities yielded by the data, the learner draws the rational conclusion and decides that the word refers to the concept with the highest posterior probability.
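The inference described above can be illustrated with a minimal sketch. Everything concrete here is an assumption made for illustration: the three-concept toy hierarchy, the object names, the prior values, and the choice to treat examples as sampled uniformly with replacement from a concept's extension. None of it is taken from Xu and Tenenbaum's actual model or data.

```python
from fractions import Fraction

# Hypothetical toy hierarchy: each concept maps to its extension,
# i.e. the set of objects the concept covers.
EXTENSIONS = {
    "dalmatian": {"dalmatian1", "dalmatian2"},
    "dog": {"dalmatian1", "dalmatian2", "poodle1", "terrier1"},
    "animal": {"dalmatian1", "dalmatian2", "poodle1", "terrier1",
               "cat1", "pig1", "bird1", "fish1"},
}

# Hypothetical priors: the basic-level concept ("dog") gets the most mass.
PRIORS = {
    "dalmatian": Fraction(1, 5),
    "dog": Fraction(3, 5),
    "animal": Fraction(1, 5),
}


def likelihood(examples, extension):
    """Probability of the examples if each is drawn uniformly at random
    (with replacement, an assumption) from the concept's extension."""
    if not all(x in extension for x in examples):
        return Fraction(0)
    return Fraction(1, len(extension)) ** len(examples)


def posterior(examples):
    """Combine priors and likelihoods, then normalize over all concepts."""
    unnormalized = {
        concept: PRIORS[concept] * likelihood(examples, extension)
        for concept, extension in EXTENSIONS.items()
    }
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("no stored concept covers all of the examples")
    return {concept: p / total for concept, p in unnormalized.items()}


def best_concept(examples):
    """Return the concept with the highest posterior probability."""
    post = posterior(examples)
    return max(post, key=post.get)


if __name__ == "__main__":
    print(best_concept(["dalmatian1"]))
    print(best_concept(["dalmatian1", "dalmatian2", "dalmatian1"]))
```

In this toy setup a single dalmatian example favors the higher-prior basic-level concept, while several dalmatian examples shift the posterior toward the narrower concept, because small extensions assign higher likelihood to each consistent example.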

This theory makes predictions about human behavior, subsequently confirmed by experiment, that contradict some other common approaches to word learning. However, the view that concepts are discrete nodes organized within a hierarchical graph structure has historically been treated with skepticism for a variety of reasons. I will be talking about some of the problems I see with this framework, and will describe some new experiments that appear to disconfirm some predictions of the Xu-Tenenbaum theory. I am interested in understanding how, or whether, extensions of the structured probabilistic approach can accommodate these results.

"Statistical learning" is a popular psychological explanation for how human learners come to understand complex patterns or relationships in the world. Theories of statistical learning tend to make two principal claims: (1) input from the environment is far richer in data than some imagine, and (2) human learners are much better at detecting this structure than people might think. Critics of statistical learning are fond of finding situations where these claims appear to be false, and of arguing that a much richer and more substantial representational and learning system must therefore be invoked.

This talk will focus on two such situations where statistical learning has been claimed to be an insufficient explanation: (1) infants' word segmentation of nouns versus verbs, and (2) learning about non-adjacent relationships in a language. In a series of corpus analyses, as well as behavioral studies with infants and adults, I hope to show that in both of these situations, simple statistical learning mechanisms are more than capable of predicting learning - as long as one is open-minded about what the "units" are over which statistics are computed.

HAMLET mailing list

The MALBEC lectures ("Mathematics, Algorithms, Learning, Brains, Engineering, Computers")

Spring 2009 archive

Fall 2008 archive

Contact: Tim Rogers (ttrogers@wisc.ed), Jerry Zhu (jerryzhu@cs.wisc.ed) (Add 'u' to the addresses)
