The Incremental Multiresolution Matrix Factorization Algorithm

Abstract:

Multiresolution analysis and matrix factorization are foundational tools in computer vision.

In this work, we study the interface between these two distinct topics and obtain techniques to uncover hierarchical block structure in symmetric matrices, an important aspect in the success of many vision problems.

Our new algorithm, the incremental multiresolution matrix factorization, uncovers such structure one feature at a time, and hence scales well to large matrices.

We describe how this multiscale analysis goes much farther than what a direct "global" factorization of the data can identify.

We evaluate the efficacy of the resulting factorizations for relative leveraging within regression tasks using medical imaging data.

We also use the factorization on representations learned by popular deep networks, providing evidence of their ability to infer semantic relationships even when they are not explicitly trained to do so.

We show that this algorithm can be used as an exploratory tool to improve the network architecture, and within numerous other settings in vision.

The two publications corresponding to Incremental MMF are as follows:

V. K. Ithapu, Decoding the Deep: Exploring Class Hierarchies of Deep Representations using Multiresolution Matrix Factorization, Explainable Computer Vision Workshop (ECVW), CVPR 2017 [PDF]

V. K. Ithapu, R. Kondor, S. C. Johnson, V. Singh, The Incremental Multiresolution Matrix Factorization Algorithm, Computer Vision and Pattern Recognition (CVPR), 2017 [PDF]

The MATLAB scripts for incremental MMF can be found at this link.

The scripts are being improved upon with additional d3 visualizations.

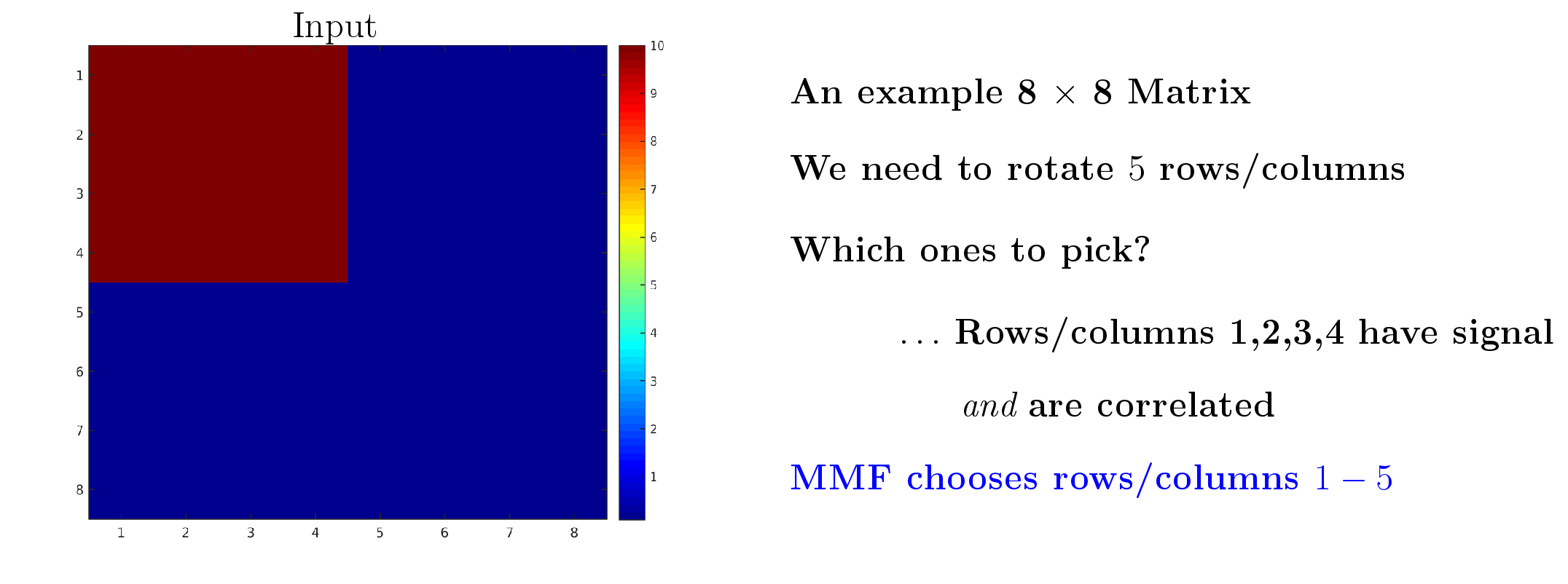

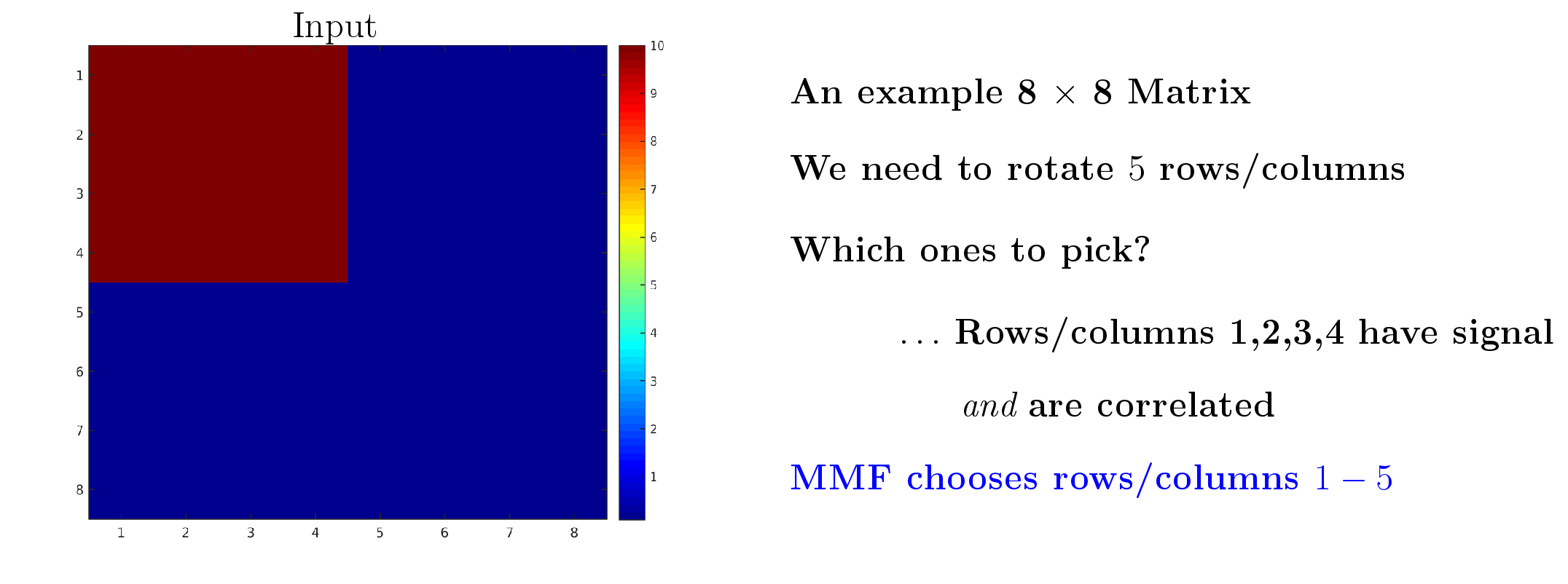

What is Multiresolution Matrix Factorization doing to a symmetric matrix?

Example: 5th-order MMF on an 8 x 8 matrix
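As a rough illustration of what a single level of such a factorization does, the sketch below applies one orthogonal rotation to a small symmetric matrix and then retires one of the rotated indices as a "wavelet". It is a simplified second-order (pairwise, Jacobi-style) variant, not the 5th-order incremental algorithm from the paper. The function names `rotate_pair` and `one_level`, the pair-selection rule (largest off-diagonal entry), and the toy 4 x 4 matrix are all assumptions made for illustration.

```python
import math

def rotate_pair(A, i, j):
    """Return Q A Q^T, where Q is a Givens rotation in the (i, j) plane
    chosen so that entry (i, j) of the result is zero."""
    n = len(A)
    theta = 0.5 * math.atan2(2.0 * A[i][j], A[i][i] - A[j][j])
    c, s = math.cos(theta), math.sin(theta)
    Q = [[1.0 if r == col else 0.0 for col in range(n)] for r in range(n)]
    Q[i][i], Q[i][j], Q[j][i], Q[j][j] = c, s, -s, c
    QA = [[sum(Q[r][k] * A[k][col] for k in range(n)) for col in range(n)]
          for r in range(n)]
    # (QA) Q^T: entry (r, col) is sum_k QA[r][k] * Q[col][k]
    return [[sum(QA[r][k] * Q[col][k] for k in range(n)) for col in range(n)]
            for r in range(n)]

def one_level(A, active):
    """One simplified level (assumed rule, not the paper's): rotate the most
    strongly coupled active pair, then retire the rotated index that is least
    coupled to the remaining active indices."""
    pairs = [(p, q) for p in active for q in active if p < q]
    i, j = max(pairs, key=lambda pq: abs(A[pq[0]][pq[1]]))
    B = rotate_pair(A, i, j)

    def off_diagonal_norm(r):
        return sum(B[r][k] ** 2 for k in active if k != r)

    wavelet = min((i, j), key=off_diagonal_norm)
    remaining = [k for k in active if k != wavelet]
    return B, wavelet, remaining

# Toy symmetric matrix with two loosely coupled 2 x 2 blocks (made-up values).
A = [[4.0, 3.0, 0.1, 0.0],
     [3.0, 4.0, 0.0, 0.1],
     [0.1, 0.0, 2.0, 1.0],
     [0.0, 0.1, 1.0, 2.0]]
B, wavelet, remaining = one_level(A, [0, 1, 2, 3])
print("retired index:", wavelet, "remaining active set:", remaining)
```

Repeating `one_level` on the remaining active set yields a sequence of sparse rotations; the order in which indices are retired is what gives a level-by-level hierarchy of the kind visualized on this page.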

Let us now look at some interesting relationships inferred by the algorithm on several categories from ImageNet.

An accompanying d3-based visualization is under preparation.

Beyond the following visualizations, check out

1. Comparing Human and Deep Semantics using MMF Human_vs_Deep

2. Comparing representational structure across GoogLeNet, ResNet and VGG-S Compare_via_MMFs

The hierarchy of ImageNet representations from GoogLeNet

We will now visualize MMF compositions corresponding to 5 different sets of ImageNet classes/categories, each of which includes objects/things belonging to a specific genre.

For each set, the representations from the last hidden layer of GoogLeNet (the layer that feeds the softmax) are used to construct the class-by-class covariance matrix, on which a 5th-order MMF graph is built.

The names of the different classes involved, the corresponding visualizations and some interesting inferences about the compositions/hierarchy are presented below (the first visualization is the one reported in Figure 4 of the main paper).
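The covariance construction described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' script: the helper name `class_covariance` is hypothetical, the feature vectors are made-up toy values standing in for last-hidden-layer activations, and the choice to average features per class and then take the covariance across feature dimensions is one plausible reading of "class-by-class covariance".

```python
def class_covariance(features_by_class):
    """Build a class-by-class covariance matrix from per-class feature vectors.

    features_by_class maps a class name to a list of equal-length feature
    vectors (one per image). Assumed recipe: average the vectors of each
    class, center each class mean, and take inner products across features.
    """
    names = list(features_by_class)
    means = []
    for name in names:
        vectors = features_by_class[name]
        dim = len(vectors[0])
        means.append([sum(v[k] for v in vectors) / len(vectors)
                      for k in range(dim)])
    dim = len(means[0])
    centered = [[x - sum(m) / dim for x in m] for m in means]
    n = len(names)
    cov = [[sum(centered[a][k] * centered[b][k] for k in range(dim)) / (dim - 1)
            for b in range(n)] for a in range(n)]
    return names, cov

# Toy 4-dimensional "representations" for three classes (made-up numbers).
toy_features = {
    "chapati": [[1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 5.0]],
    "pita":    [[1.0, 2.0, 3.0, 4.5], [1.2, 2.0, 3.0, 4.0]],
    "ketchup": [[4.0, 3.0, 2.0, 1.0], [5.0, 3.0, 2.0, 1.0]],
}
names, cov = class_covariance(toy_features)
for name, row in zip(names, cov):
    print(name, [round(x, 3) for x in row])
```

The resulting symmetric matrix is the kind of input on which the MMF graph would then be built; in the toy data the two breads covary positively while ketchup covaries negatively with both.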

12 classes: bannock, chapati, pita, salad, salsa, saute, ketchup, chutney, limpa, strawberry, margarine, shortcake

The breads appear at the first level/composition. The different sides and desserts follow at later levels. See Section 4.3 of the main paper for an exhaustive discussion of this hierarchy.

10 classes: cow, insectivore, hound, puppy, garden spider, ptarmigan, phalanger, killer whale, green lizard, kangaroo

This is a diverse set of classes, i.e., the contextual information (background, objects that typically co-occur, etc.) for cow and hound may be drastically different from that for kangaroo and ptarmigan.

Clearly, the most distinct classes (green lizard, killer whale) are resolved at the highest levels. The composition at the first level involves those classes which share context: there is almost certainly grass, trees or bark in these images.

Given the first level, the graph shows that kangaroo is more expressible by the first-level composition than green lizard, implying a clear sense of hierarchy in the learned representations.

11 classes: male horse, war horse, pony, mule, elk, blackbuck, deer, goat, bison, sheep, zebra

The categories that MMF picked for the first-level compositions are highly correlated to begin with, both in texture/pixel values and in background (most appear against a grassy or landscape background).

It is reasonable to say that, by human perception, blackbuck, deer and goat are the classes most related to the first-level compositions, and these are indeed the ones composed at the next level.

The class zebra is clearly the most distinct one, implying that even many compositions of the first- and second-level categories may not really capture what constitutes a zebra.

12 classes: rule, rack, squash, stick, ski, sheet, couch, table, sail, roller, pot, toilet seat

8 of these 12 classes are sports accessories/objects and the rest (pot, couch, sheet, toilet seat) are more household-type categories.

This set was chosen to see what MMF does when it is forced to pick a hierarchy among arbitrary classes. Clearly, pot shows up at the last level.

The first-level compositions are 5 of the sports-related classes. Interestingly, couch is closer in composition to the first level than roller coaster or sail, which may be because the context of four of the first-level classes is that they are "indoors". The classes sail and roller coaster have sky and water as background respectively, making them distinct enough from the first-level composition that couch or toilet seat is picked up instead.

10 classes: bag, bathtub, basket, blackboard, box, bench, building, bottle, ball, basketball

This set includes 5 different household/kitchen classes and at least two outlier classes -- ball and basketball -- which belong to sports.

Clearly, they show up at the last level of the factorization. Interestingly, the first-level composition is more informative for bench and building than for bottle.

Many of the inferences made with the earlier set of 12 classes (and its visualization) still hold here, especially those regarding outlier/peculiar classes that may not directly relate to the rest of the classes on which MMF is operating.

The PDF document available at this link is the accompanying supplementary information for the main paper.

It contains the following details.

- The derivation of MMF decomposition error (Eq. 3 from the paper)

- Complete details of the datasets used in our experiments.

- All the simulations comparing Incremental MMF and the corresponding batch-wise versions (Section 4.1):

  - Error comparisons, and

  - Speed-ups

- All the experiments (and relevant plots) from Section 4.2 (MMF Scores) and Figure 3.

- Hierarchical clustering (baseline) compositions of the 12 classes from Figure 4 (see Section 4.3.2)