Zhenmei Shi

Ph.D.

Computer Sciences

Google Scholar | GitHub | LinkedIn | CV

About Me

I am a Ph.D. candidate in Computer Science at the University of Wisconsin-Madison, advised by Yingyu Liang. I obtained my B.S. degree in Computer Science and Pure Mathematics Advanced from the Hong Kong University of Science and Technology in 2019.

Currently, I am an AI Research Scientist Intern at Salesforce, Palo Alto (summer 2024), working with Shafiq Joty and Huan Wang. Recently, I was a Research Scientist Intern at Adobe, Seattle, working with Zhao Song and Jiuxiang Gu. Previously, I completed two internships at Google, Mountain View, with the YouTube Ads Machine Learning team and the Pixel Camera team; one internship with the Megvii (Face++) Foundation Model team, Beijing; and two internships with the Tencent YouTu team, Shenzhen.

My research focuses mainly on understanding the learning and adaptation of Foundation Models, including Large Language Models, Vision Language Models, Diffusion Models, and Shallow Networks. Feel free to contact me for collaboration.

Publications

* denotes equal contribution or alphabetical order.

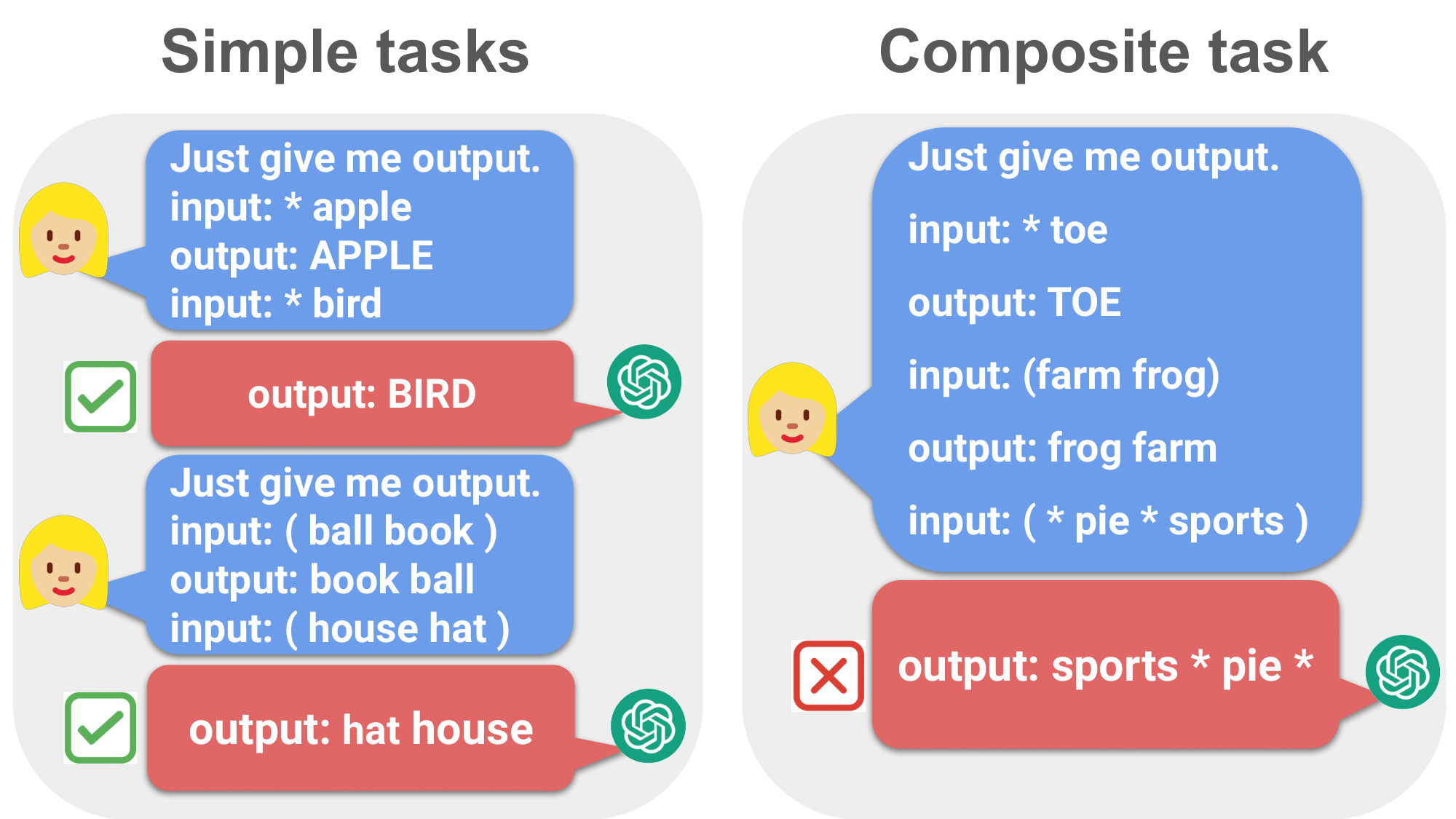

Do Large Language Models Have Compositional Ability? An Investigation into Limitations and Scalability

Zhuoyan Xu*, Zhenmei Shi*, Yingyu Liang

COLM 2024 [ OpenReview ] [ arXiv ] [ Workshop ] [ Code ] [ Slides ] [ Poster ]

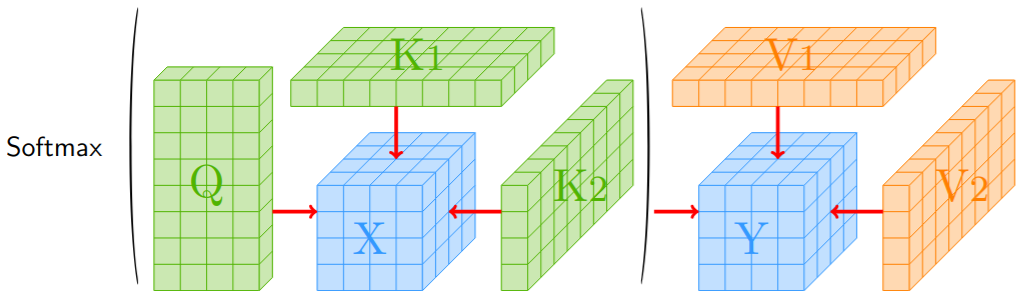

Differential Privacy of Cross-Attention with Provable Guarantee

Jiuxiang Gu*, Yingyu Liang*, Zhenmei Shi*, Zhao Song*, Yufa Zhou*

arXiv, 2024 [ arXiv ]

Differential Privacy Mechanisms in Neural Tangent Kernel Regression

Jiuxiang Gu*, Yingyu Liang*, Zhizhou Sha*, Zhenmei Shi*, Zhao Song*

arXiv, 2024 [ arXiv ]

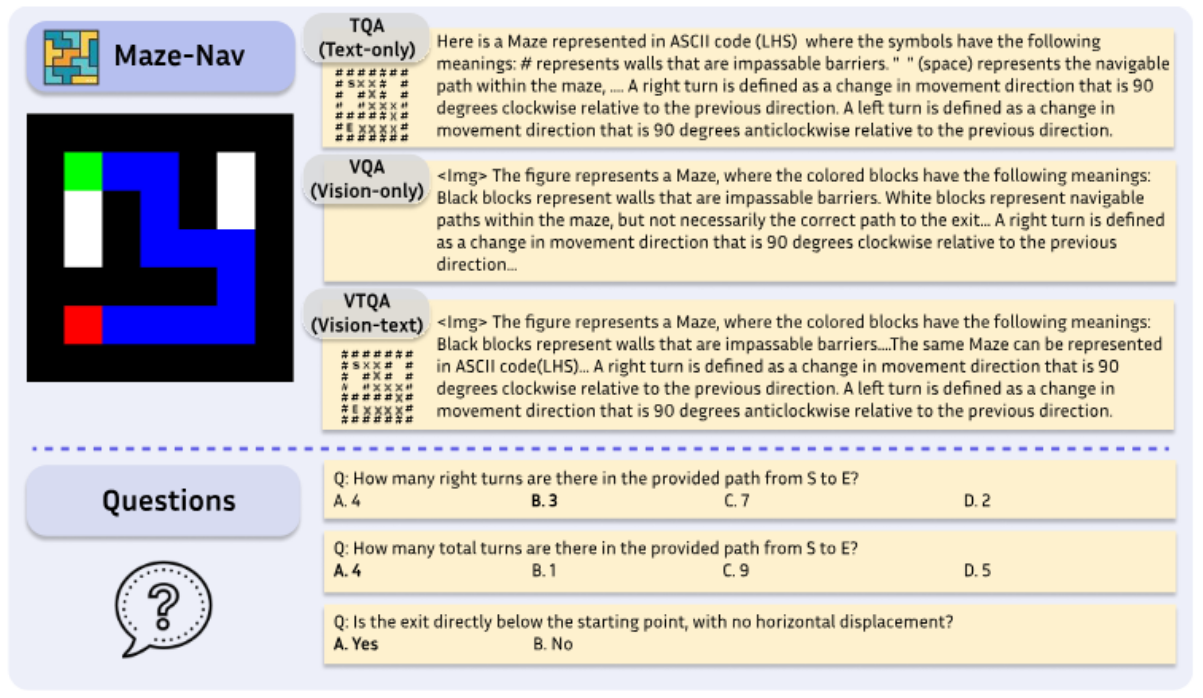

Is A Picture Worth A Thousand Words? Delving Into Spatial Reasoning for Vision Language Models

Jiayu Wang, Yifei Ming, Zhenmei Shi, Vibhav Vineet, Xin Wang, Neel Joshi

arXiv, 2024 [ arXiv ]

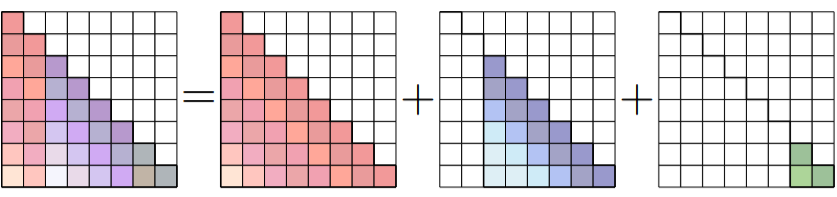

Toward Infinite-Long Prefix in Transformer

Jiuxiang Gu*, Yingyu Liang*, Zhenmei Shi*, Zhao Song*, Chiwun Yang*

arXiv, 2024 [ arXiv ] [ Code ]

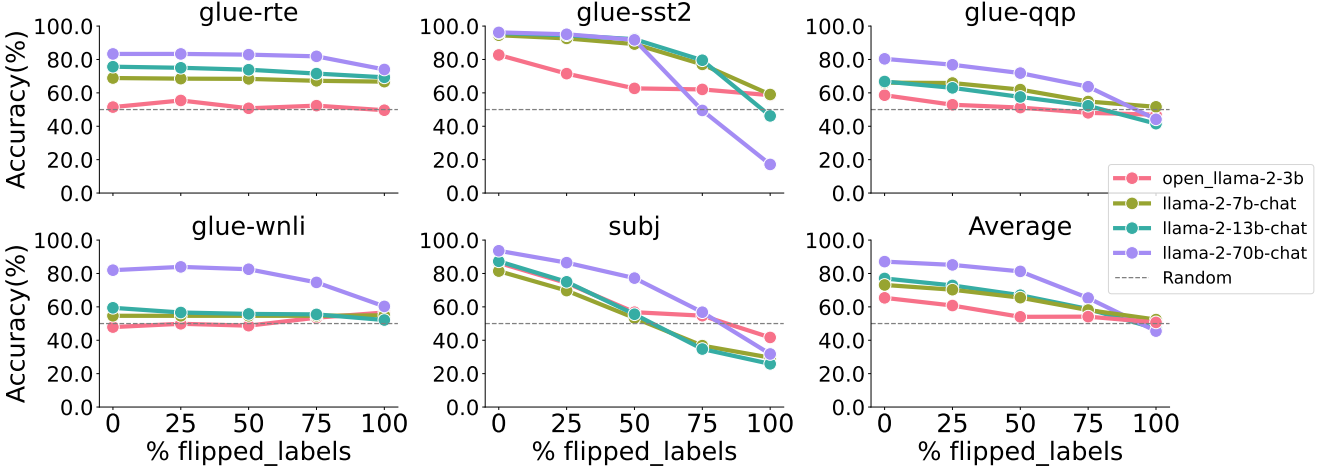

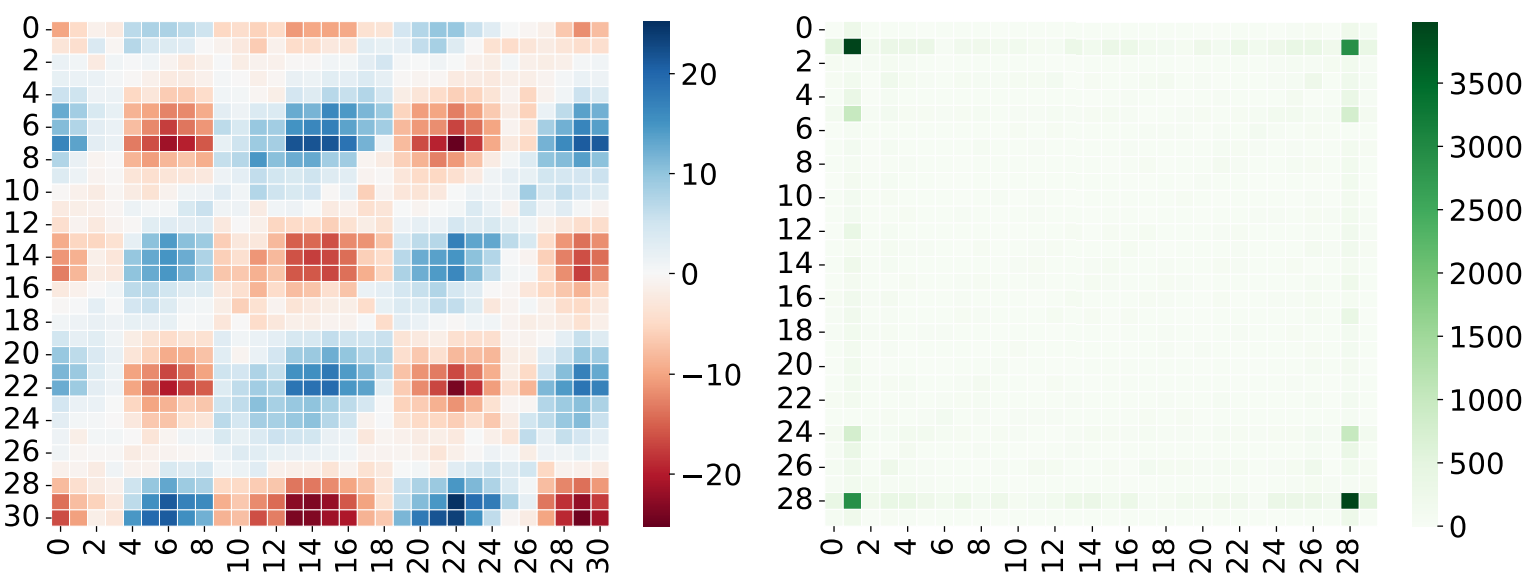

Why Larger Language Models Do In-context Learning Differently?

Zhenmei Shi, Junyi Wei, Zhuoyan Xu, Yingyu Liang

ICML 2024 [ OpenReview ] [ arXiv ] [ Poster ] [ Workshop ] [ Workshop Poster ]

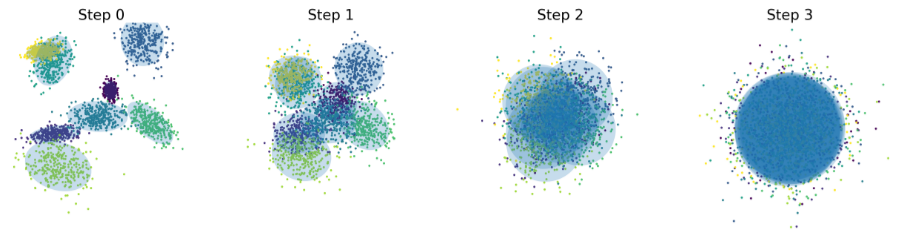

Unraveling the Smoothness Properties of Diffusion Models: A Gaussian Mixture Perspective

Jiuxiang Gu*, Yingyu Liang*, Zhenmei Shi*, Zhao Song*, Yufa Zhou*

arXiv, 2024 [ arXiv ]

Tensor Attention Training: Provably Efficient Learning of Higher-order Transformers

Jiuxiang Gu*, Yingyu Liang*, Zhenmei Shi*, Zhao Song*, Yufa Zhou*

arXiv, 2024 [ arXiv ]

Conv-Basis: A New Paradigm for Efficient Attention Inference and Gradient Computation in Transformers

Jiuxiang Gu*, Yingyu Liang*, Heshan Liu*, Zhenmei Shi*, Zhao Song*, Junze Yin*

arXiv, 2024 [ arXiv ]

Exploring the Frontiers of Softmax: Provable Optimization, Applications in Diffusion Model, and Beyond

Jiuxiang Gu*, Chenyang Li*, Yingyu Liang*, Zhenmei Shi*, Zhao Song*

arXiv, 2024 [ arXiv ]

Fourier Circuits in Neural Networks: Unlocking the Potential of Large Language Models in Mathematical Reasoning and Modular Arithmetic

Jiuxiang Gu*, Chenyang Li*, Yingyu Liang*, Zhenmei Shi*, Zhao Song*, Tianyi Zhou*

ICLR 2024 Workshop [ OpenReview ] [ arXiv ] [ Poster ]

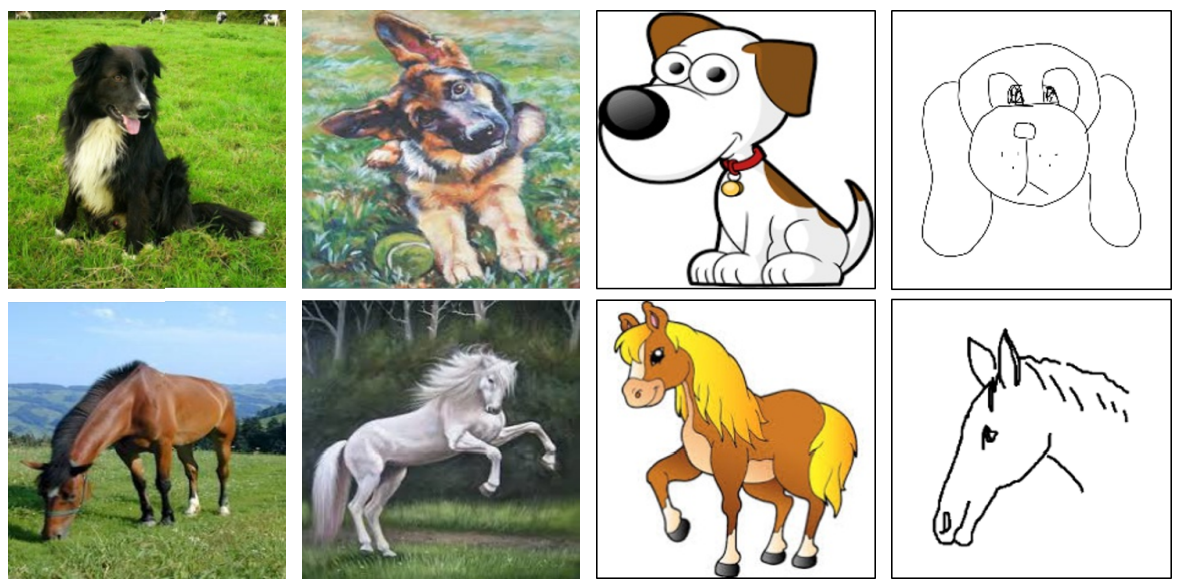

Towards Few-Shot Adaptation of Foundation Models via Multitask Finetuning

Zhuoyan Xu, Zhenmei Shi, Junyi Wei, Fangzhou Mu, Yin Li, Yingyu Liang

ICLR 2024 [ OpenReview ] [ arXiv ] [ Code ] [ Slides ] [ Poster ] [ Video ] [ Workshop ] [ Workshop Poster ] [ Workshop Slides ]

Domain Generalization via Nuclear Norm Regularization

Zhenmei Shi*, Yifei Ming*, Ying Fan*, Frederic Sala, Yingyu Liang

CPAL 2024 Oral [ OpenReview ] [ arXiv ] [ Poster ] [ Code ] [ Slides ] [ Workshop ] [ Workshop Poster ]

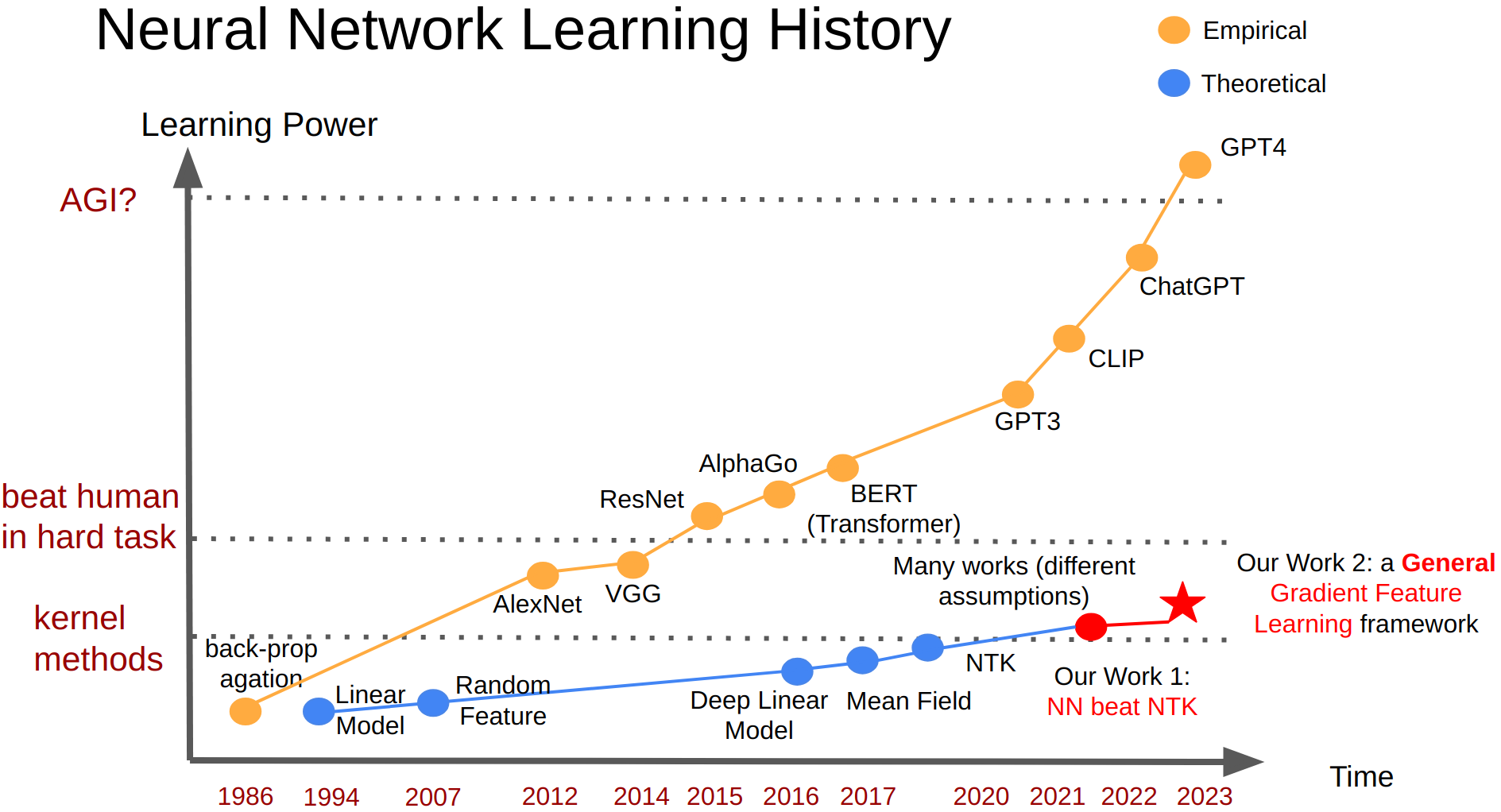

Provable Guarantees for Neural Networks via Gradient Feature Learning

Zhenmei Shi*, Junyi Wei*, Yingyu Liang

NeurIPS 2023 [ OpenReview ] [ arXiv ] [ Video ] [ Slides ] [ Poster ]

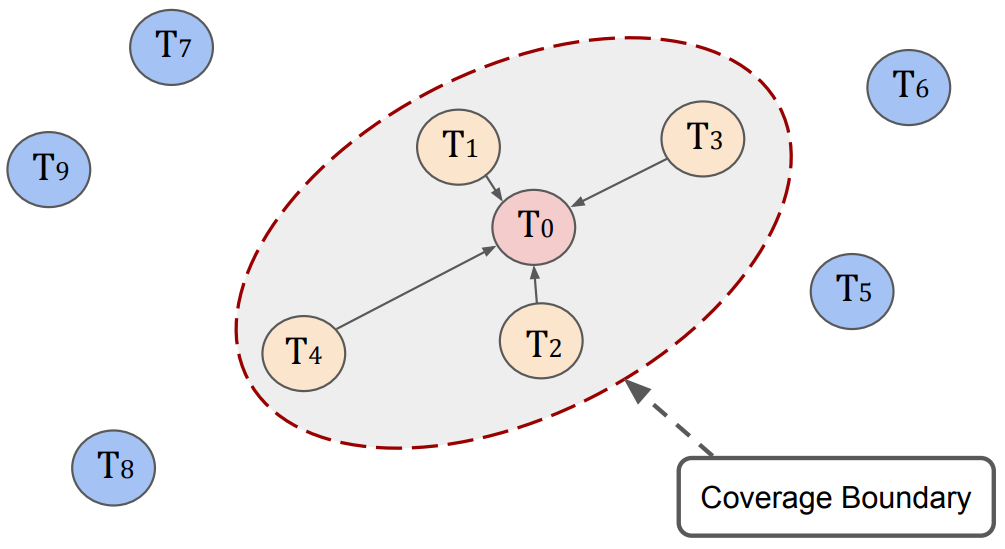

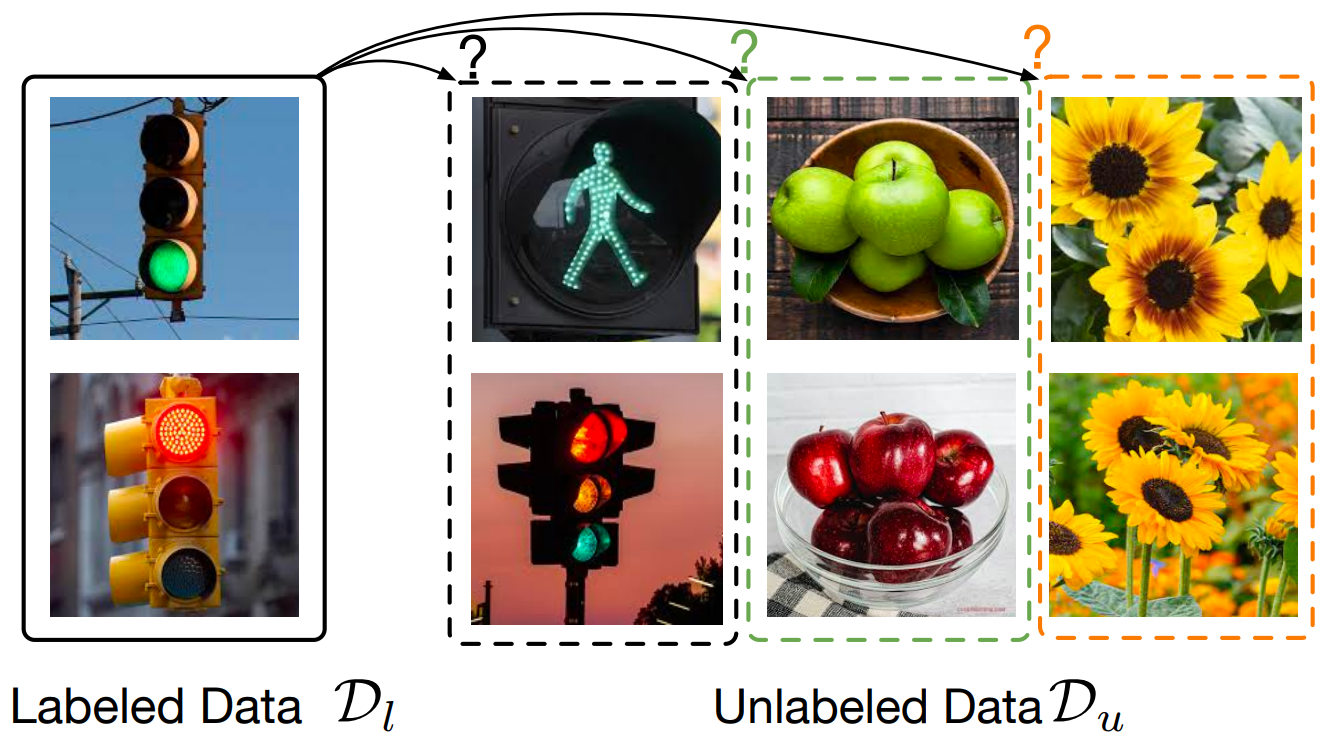

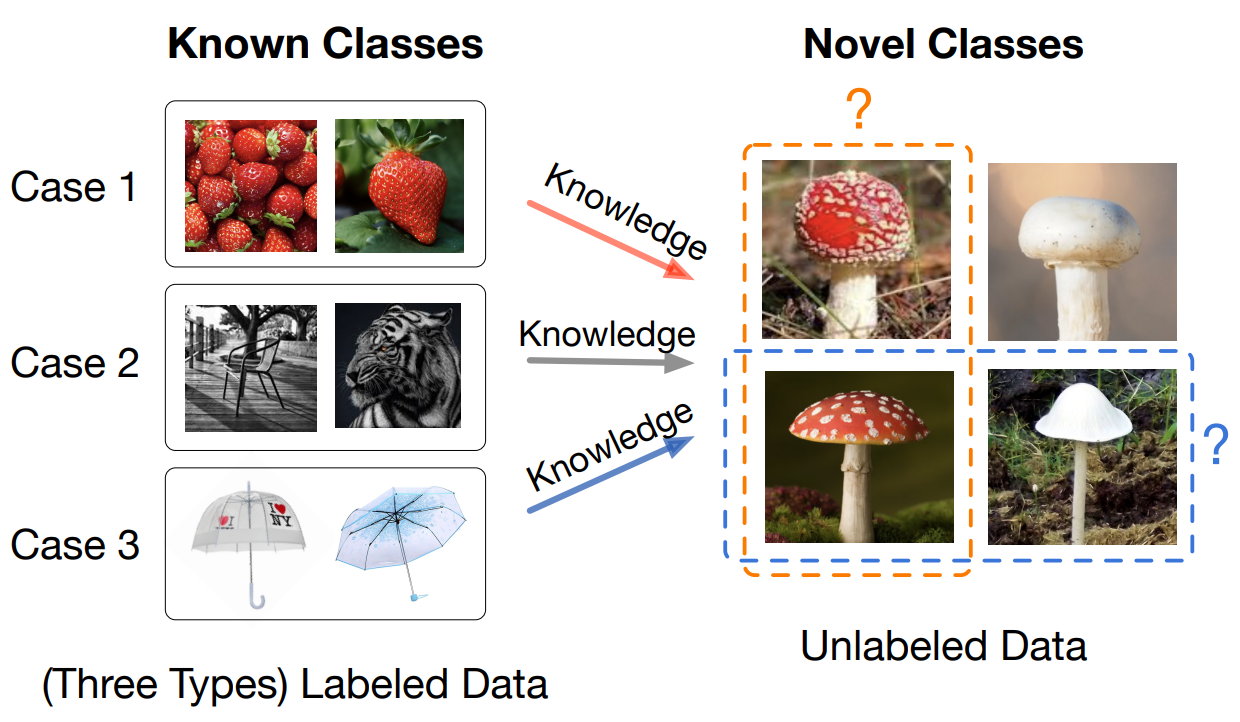

A Graph-Theoretic Framework for Understanding Open-World Semi-Supervised Learning

Yiyou Sun, Zhenmei Shi, Yixuan Li

NeurIPS 2023 Spotlight [ OpenReview ] [ arXiv ] [ Video ] [ Code ] [ Slides ]

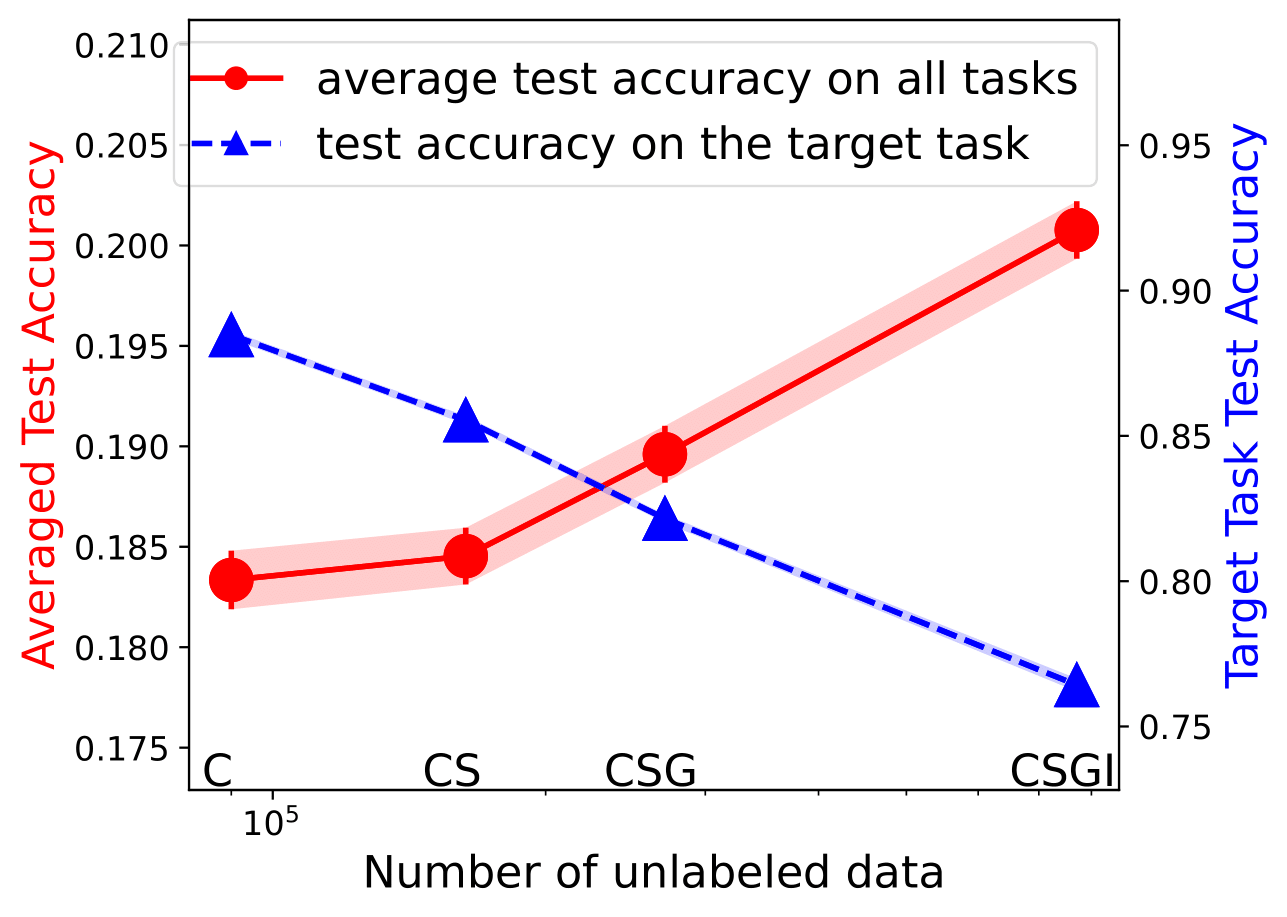

When and How Does Known Class Help Discover Unknown Ones? Provable Understandings Through Spectral Analysis

Yiyou Sun, Zhenmei Shi, Yingyu Liang, Yixuan Li

ICML 2023 [ OpenReview ] [ arXiv ] [ Video ] [ Code ]

The Trade-off between Universality and Label Efficiency of Representations from Contrastive Learning

Zhenmei Shi*, Jiefeng Chen*, Kunyang Li, Jayaram Raghuram, Xi Wu, Yingyu Liang, Somesh Jha

ICLR 2023 Spotlight (Accept Rate: 7.95%) [ OpenReview ] [ arXiv ] [ Poster ] [ Code ] [ Slides ] [ Video ] [ Workshop ] [ Workshop Poster ]

A Theoretical Analysis on Feature Learning in Neural Networks: Emergence from Inputs and Advantage over Fixed Features

Zhenmei Shi*, Junyi Wei*, Yingyu Liang

ICLR 2022 [ OpenReview ] [ arXiv ] [ Poster ] [ Code ] [ Slides ] [ Video ]

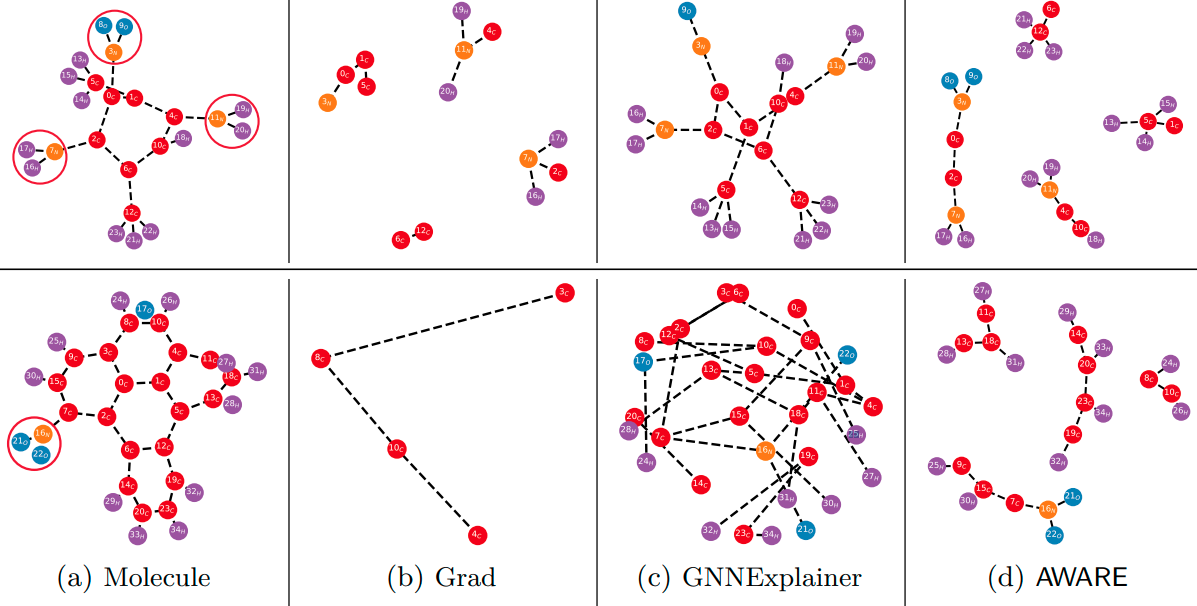

Attentive Walk-Aggregating Graph Neural Networks

Mehmet F. Demirel, Shengchao Liu, Siddhant Garg, Zhenmei Shi, Yingyu Liang

TMLR 2022 [ OpenReview ] [ arXiv ] [ Code ]

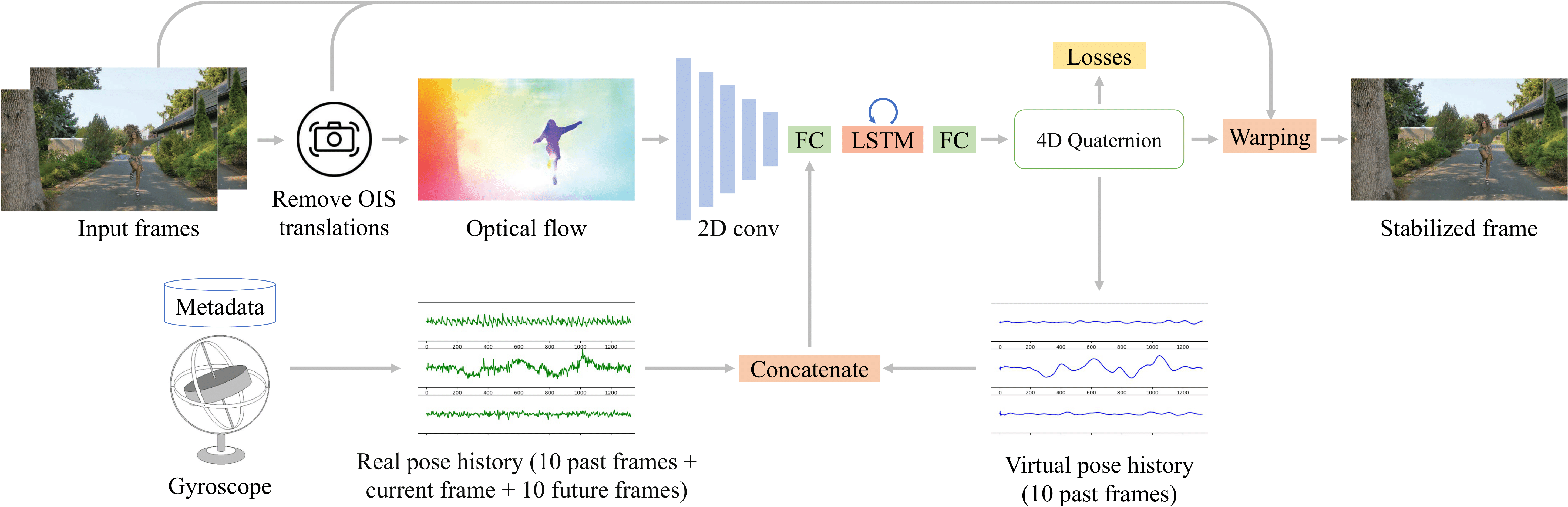

Deep Online Fused Video Stabilization

Zhenmei Shi, Fuhao Shi, Wei-Sheng Lai, Chia-Kai Liang, Yingyu Liang

WACV 2022 [ Paper ] [ arXiv ] [ Poster ] [ Project ] [ Code ] [ Dataset ]

Structured Feature Learning for End-to-End Continuous Sign Language Recognition

Zhaoyang Yang*, Zhenmei Shi*, Xiaoyong Shen, Yu-Wing Tai

arXiv, 2019 [ arXiv ] [ News ]

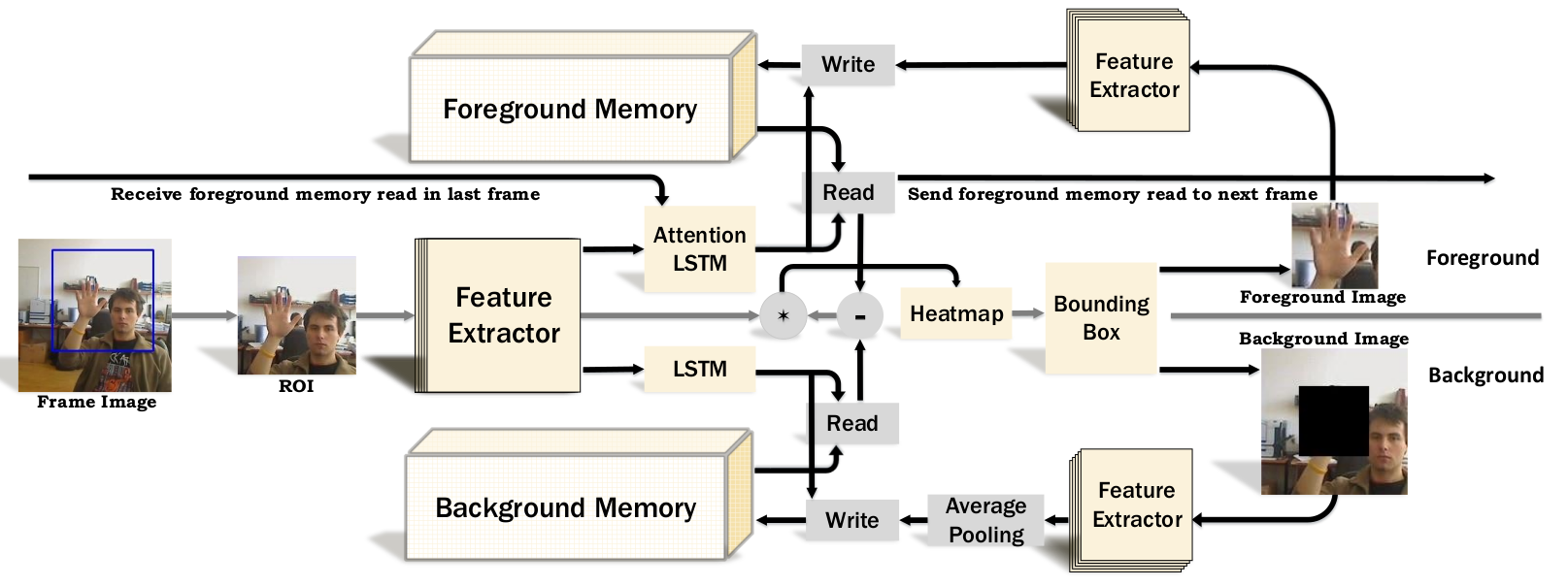

Dual Augmented Memory Network for Unsupervised Video Object Tracking

Zhenmei Shi*, Haoyang Fang*, Yu-Wing Tai, Chi Keung Tang

arXiv, 2019 [ arXiv ] [ Project ]

Technical Projects

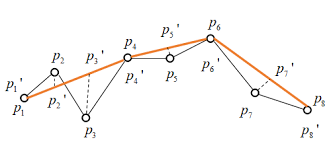

Velocity Vector Preserving Trajectory Simplification

Technical Report, 2018

[ Paper ] [ Code ]

Deep Colorization, 2018.

[ News ]

Research & Work Experience

Research Assistant

University of Wisconsin-Madison

2019 - Now | Yingyu Liang

AI Research Scientist Intern

Salesforce

Summer 2024 | Shafiq Joty and Huan Wang

Research Scientist Intern

Adobe

Spring 2024 | Zhao Song and Jiuxiang Gu

Software Engineering Intern

Google in Mountain View, CA

Summer 2021 | Myra Nam

Summer 2020 | Fuhao Shi

Research Intern

Megvii (Face++) in Beijing

Summer 2019 | Xiangyu Zhang

Research Intern

Tencent YouTu in Shenzhen

Winter 2019 | Zhaoyang Yang and Yu-Wing Tai

Winter 2018 | Xin Tao and Yu-Wing Tai

Research Assistant

Hong Kong University of Science and Technology

2018 - 2019 | Chi Keung Tang

2017 - 2018 | Raymond Wong

Summer 2016 | Ji-Shan Hu

Research Intern

Oak Ridge National Laboratory in the USA

Summer 2017 | Cheng Liu and Kwai L. Wong

Academic Services

Conference Reviewer at ICLR 2022-2024, NeurIPS 2022-2024, ICML 2022 and 2024, ICCV 2021-2023, CVPR 2021-2022, ECCV 2020-2022, WACV 2022

Journal Reviewer at JVCI, IEEE Transactions on Information Theory

Teaching

Teaching Assistant of CS220 (Data Programming I) at UW-Madison (Spring 2020)

Teaching Assistant of CS301 (Intro to Data Programming) at UW-Madison (Fall 2019)

Last updated: July 23, 2024