Prev: W1, Next: W3

Zoom: Link, TopHat: Link (341925), GoogleForm: Link, Piazza: Link, Feedback: Link.

Slide:

# Unsupervised Learning

📗 Supervised learning: \(\left(x_{1}, y_{1}\right), \left(x_{2}, y_{2}\right), ..., \left(x_{n}, y_{n}\right)\).

📗 Unsupervised learning: \(\left(x_{1}\right), \left(x_{2}\right), ..., \left(x_{n}\right)\).

➩ Clustering: separates items into groups.

➩ Novelty (outlier) detection: finds items that are different (two groups).

➩ Dimensionality reduction: represents each item by a lower dimensional feature vector while maintaining key characteristics.

📗 Unsupervised learning applications:

➩ Google news.

➩ Google photo.

➩ Image segmentation.

➩ Text processing.

➩ Data visualization.

➩ Efficient storage.

➩ Noise removal.

# High Dimensional Data

📗 Text and image data are usually high dimensional: Link.

➩ The number of features of bag of words representation is the size of the vocabulary.

➩ The number of features of pixel intensity features is the number of pixels of the images.

📗 Dimensionality reduction is a form of unsupervised learning since it does not require labeled training data.

# Principal Component Analysis

📗 Principal component analysis rotates the axes (\(x_{1}, x_{2}, ..., x_{m}\)) so that the first \(K\) new axes (\(u_{1}, u_{2}, ..., u_{K}\)) capture the directions of the greatest variability of the training data. The new axes are called principal components: Link, Wikipedia.

➩ Find the direction of the greatest variability, \(u_{1}\).

➩ Find the direction of the greatest variability that is orthogonal (perpendicular) to \(u_{1}\), say \(u_{2}\).

➩ Repeat until there are \(K\) such directions \(u_{1}, u_{2}, ..., u_{K}\).

TopHat Discussion

ID:📗 [1 points] Given the following dataset, find the direction (click on the diagram below to change the direction) in which the variation is the largest.

Projected variance:

Direction:

Projected points:

TopHat Discussion

ID:📗 [1 points] Given the following dataset, find the direction (click on the diagram below to change the direction) in which the variation is the largest.

Projected variance:

Direction:

Projected points:

# Geometry

📗 A vector \(u_{k}\) is a unit vector if it has length 1: \(\left\|u_{k}\right\|^{2} = u^\top_{k} u_{k} = u_{k 1}^{2} + u_{k 2}^{2} + ... + u_{k m}^{2} = 1\).

📗 Two vectors \(u_{j}, u_{k}\) are orthogonal (or uncorrelated) if \(u^\top_{j} u_{k} = u_{j 1} u_{k 1} + u_{j 2} u_{k 2} + ... + u_{j m} u_{k m} = 0\).

📗 The projection of \(x_{i}\) onto a unit vector \(u_{k}\) is \(\left(u^\top_{k} x_{i}\right) u_{k} = \left(u_{k 1} x_{i 1} + u_{k 2} x_{i 2} + ... + u_{k m} x_{i m}\right) u_{k}\) (it is a number \(u^\top_{k} x_{i}\) multiplied by a vector \(u_{k}\)). Since \(u_{k}\) is a unit vector, the length of the projection is \(u^\top_{k} x_{i}\).

Math Note

📗 The dot product between two vectors \(a = \left(a_{1}, a_{2}, ..., a_{m}\right)\) and \(b = \left(b_{1}, b_{2}, ..., b_{m}\right)\) is usually written as \(a \cdot b = a^\top b = \begin{bmatrix} a_{1} & a_{2} & ... & a_{m} \end{bmatrix} \begin{bmatrix} b_{1} \\ b_{2} \\ ... \\ b_{m} \end{bmatrix} = a_{1} b_{1} + a_{2} b_{2} + ... + a_{m} b_{m}\). In this course, to avoid confusion with scalar multiplication, the notation \(a^\top b\) will be used instead of \(a \cdot b\).

📗 If \(x_{i}\) is projected onto some vector \(u_{k}\) that is not a unit vector, then the formula for projection is \(\left(\dfrac{u^\top_{k} x_{i}}{u^\top_{k} u_{k}}\right) u_{k}\). Since for unit vector \(u_{k}\), \(u^\top_{k} u_{k} = 1\), the two formulas are equivalent.

TopHat Discussion

📗 [1 points] Compute the projection of the red vector onto the blue vector (drag the tips of the red or blue arrow, the green arrow represents the projection).

Red vector: , blue vector: .

Unit red vector: , unit blue vector: .

Projection: , length of projection: .

# Statistics

📗 The (unbiased) estimate of the variance of \(x_{1}, x_{2}, ..., x_{n}\) in one dimensional space (\(m = 1\)) is \(\dfrac{1}{n - 1} \left(\left(x_{1} - \mu\right)^{2} + \left(x_{2} - \mu\right)^{2} + ... + \left(x_{n} - \mu\right)^{2}\right)\), where \(\mu\) is the estimate of the mean (average) or \(\mu = \dfrac{1}{n} \left(x_{1} + x_{2} + ... + x_{n}\right)\). The maximum likelihood estimate has \(\dfrac{1}{n}\) instead of \(\dfrac{1}{n-1}\).

📗 In higher dimensional space, the estimate of the variance is \(\dfrac{1}{n - 1} \left(\left(x_{1} - \mu\right)\left(x_{1} - \mu\right)^\top + \left(x_{2} - \mu\right)\left(x_{2} - \mu\right)^\top + ... + \left(x_{n} - \mu\right)\left(x_{n} - \mu\right)^\top\right)\). Note that \(\mu\) is an \(m\) dimensional vector, and each of the \(\left(x_{i} - \mu\right)\left(x_{i} - \mu\right)^\top\) is an \(m\) by \(m\) matrix, so the resulting variance estimate is a matrix called variance-covariance matrix.

📗 If \(\mu = 0\), then the projected variance of \(x_{1}, x_{2}, ..., x_{n}\) in the direction \(u_{k}\) can be computed by \(u^\top_{k} \Sigma u_{k}\) where \(\Sigma = \dfrac{1}{n - 1} X^\top X\), and \(X\) is the data matrix where row \(i\) is \(x_{i}\).

➩ If \(\mu \neq 0\), then \(X\) should be centered, that is, the mean of each column should be subtracted from each column.

Math Note

📗 The projected variance formula can be derived by \(u^\top_{k} \Sigma u_{k} = \dfrac{1}{n - 1} u^\top_{k} X^\top X u_{k} = \dfrac{1}{n - 1} \left(\left(u^\top_{k} x_{1}\right)^{2} + \left(u^\top_{k} x_{2}\right)^{2} + ... + \left(u^\top_{k} x_{n}\right)^{2}\right)\) which is the estimate of the variance of the projection of the data in the \(u_{k}\) direction.

# Principal Component Analysis

📗 The goal is to find the direction that maximizes the projected variance: \(\displaystyle\max_{u_{k}} u^\top_{k} \Sigma u_{k}\) subject to \(u^\top_{k} u_{k} = 1\).

➩ This constrained maximization problem has solution (local maxima) \(u_{k}\) that satisfies \(\Sigma u_{k} = \lambda u_{k}\), and by definition of eigenvalues, \(u_{k}\) is the eigenvector corresponding to the eigenvalue \(\lambda\) for the matrix \(\Sigma\): Wikipedia.

➩ At a solution, \(u^\top_{k} \Sigma u_{k} = u^\top_{k} \lambda u_{k} = \lambda u^\top_{k} u_{k} = \lambda\), which means, the larger the \(\lambda\), the larger the variability in the direction of \(u_{k}\).

➩ Therefore, if all eigenvalues of \(\Sigma\) are computed and sorted \(\lambda_{1} \geq \lambda_{2} \geq ... \geq \lambda_{m}\), then the corresponding eigenvectors are the principal components: \(u_{1}\) is the first principal component corresponding to the direction of the largest variability; \(u_{2}\) is the second principal component corresponding to the direction of the second largest variability orthogonal to \(u_{1}\), ...

TopHat Quiz

(Past Exam Question) ID:📗 [3 points] Given the variance matrix \(\hat{\Sigma}\) = , what is the first principal component? Enter a unit vector.

📗 Answer (comma separated vector): .

# Number of Dimensions

📗 There are a few ways to choose the number of principal components \(K\).

➩ \(K\) can be selected based on prior knowledge or requirement (for example, \(K = 2, 3\) for visualization tasks).

➩ \(K\) can be the number of non-zero eigenvalues.

➩ \(K\) can be the number of eigenvalues that are larger than some threshold.

# Reduced Feature Space

📗 An original item is in the \(m\) dimensional feature space: \(x_{i} = \left(x_{i 1}, x_{i 2}, ..., x_{i m}\right)\).

📗 The new item is in the \(K\) dimensional space with basis \(u_{1}, u_{2}, ..., u_{k}\) has coordinates equal to the projected lengths of the original item: \(\left(u^\top_{1} x_{i}, u^\top_{2} x_{i}, ..., u^\top_{k} x_{i}\right)\).

📗 Other supervised learning algorithms can be applied on the new features.

TopHat Quiz

(Past Exam Question) ID:📗 [2 points] You performed PCA (Principal Component Analysis) in \(\mathbb{R}^{3}\). If the first principal component is \(u_{1}\) = \(\approx\) and the second principal component is \(u_{2}\) = \(\approx\) . What is the new 2D coordinates (new features created by PCA) for the point \(x\) = ?

📗 In the diagram, the black axes are the original axes, the green axes are the PCA axes, the red vector is \(x\), the red point is the reconstruction \(\hat{x}\) using the PCA axes.

📗 Answer (comma separated vector): .

# Reconstruction

📗 The original item can be reconstructed using the principal components. If all \(m\) principal components are used, then the original item can be perfectly reconstructed: \(x_{i} = \left(u^\top_{1} x_{i}\right) u_{1} + \left(u^\top_{2} x_{i}\right) u_{2} + ... + \left(u^\top_{m} x_{i}\right) u_{m}\).

📗 The original item can be approximated by the first \(K\) principal components: \(x_{i} \approx \left(u^\top_{1} x_{i}\right) u_{1} + \left(u^\top_{2} x_{i}\right) u_{2} + ... + \left(u^\top_{K} x_{i}\right) u_{K}\).

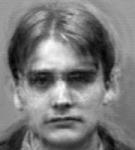

➩ Eigenfaces are eigenvectors of face images: every face can be written as a linear combination of eigenfaces. The first \(K\) eigenfaces and their coefficients can be used to determine and reconstruct specific faces: Link, Wikipedia.

Example

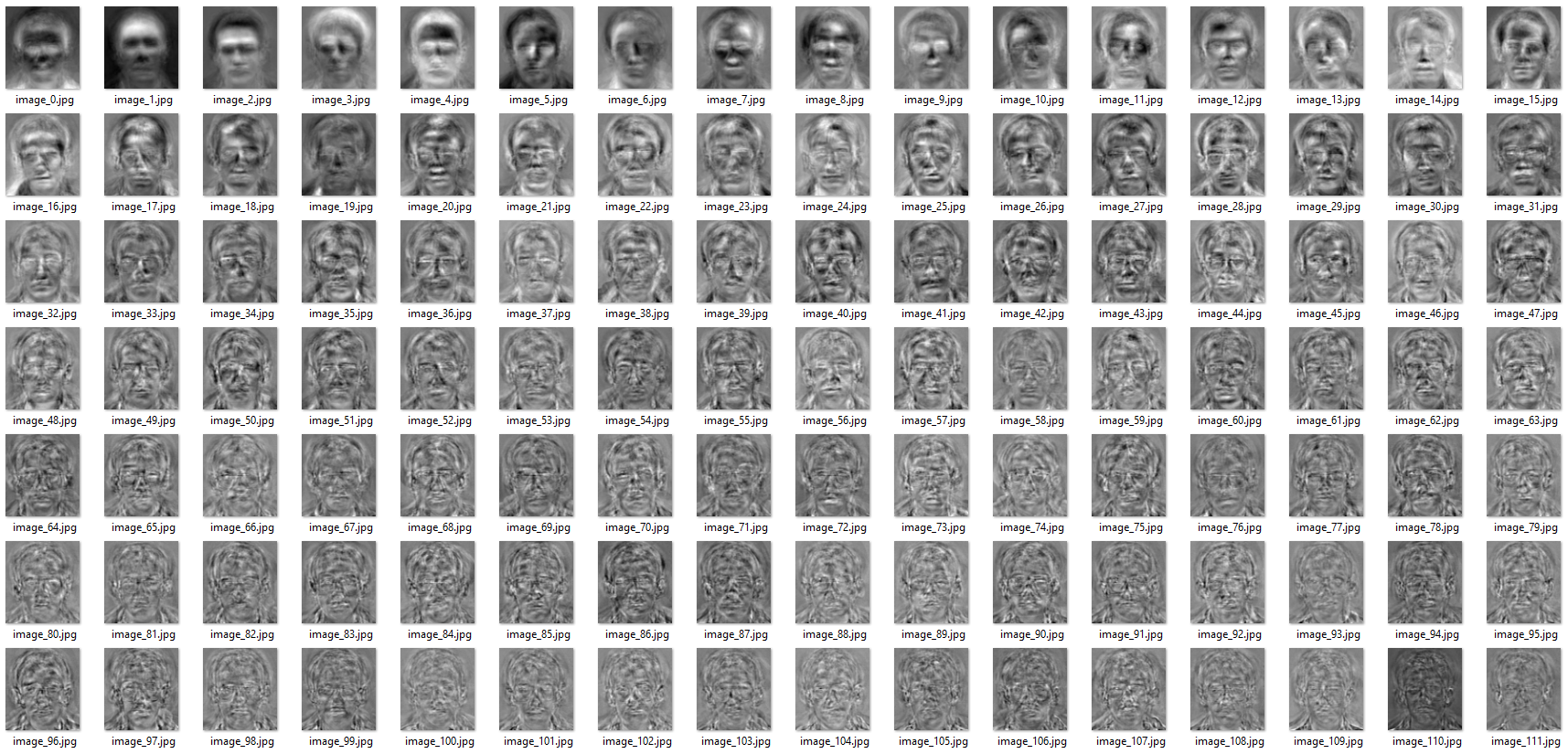

📗 An example of 111 eigenfaces:

📗 Reconstruction examples:

| K | Face 1 | Face 2 |

| 1 |

|

|

| 20 |

|

|

| 40 |

|

|

| 50 |

|

|

| 80 |

|

|

➩ Images by wellecks

# Non-linear PCA

📗 PCA features are linear in the original features.

📗 The kernel trick (used in support vector machines) can be used to first produce new features that are non-linear in the original features, then PCA can be applied to the new features: Wikipedia

➩ Dual optimization techniques can be used for kernels such as the RBF kernel which produces infinite number of new features.

📗 A neural network with both input and output being the features (no soft-max layer) is can be used for non-linear dimensionality reduction, called an auto-encoder: Wikipedia.

➩ The hidden units (\(K < m\) units, fewer than the number of input features) can be considered a lower dimensional representation of the original features.

# Graph-Based Clustering

📗 Given a graph \(G = \left(V, E\right)\):

➩ The vertices are items.

➩ The edges encode similarity between items, for example, k-nearest neighbor graph (unweighted, \(w_{ij} = 1\) if \(i\) is one of the k nearest neighbors of \(j\) and \(0\) otherwise), or fully connected similarity graph (weighted, \(w_{ij} = \exp\left(- \dfrac{\left\|x_{i} - x_{j}\right\|^{2}}{2 \sigma^{2}}\right)\)).

📗 The goal is to cut (partition) the graph (node set) \(V\) into \(C_{1}, C_{2}, ..., C_{K}\) in a way that:

➩ Minimizes the weight of the cut: \(\dfrac{1}{2} \displaystyle\sum_{k=1}^{K} \displaystyle\sum_{i \in C_{k}, j \notin C_{k}} w_{ij}\).

➩ Minimizes the normalized cut: \(\dfrac{1}{2} \displaystyle\sum_{k=1}^{K} \dfrac{1}{\displaystyle\sum_{i \in C_{k}} \text{deg}\left(i\right)} \displaystyle\sum_{i \in C_{k}, j \notin C_{k}} w_{ij}\). The cut cost is normalized by how big the cluster is to avoid cutting off small clusters.

TopHat Discussion

ID:📗 [1 points] Write down the adjacency matrix and degree matrix formed by :

➩ For K nearest neighbor, \(k\) = .

➩ For Gaussian weights, \(\sigma\) = .

TopHat Quiz

ID:📗 [3 points] Compute the cut and normalized cut of the edge between nodes \(1\) and .

📗 Answer (comma separated vector):

# Graph Laplacian

📗 Laplacian in calculus is \(\Delta f = \displaystyle\sum_{i} \dfrac{\partial^2 f}{\partial x_{i}^2}\) that measures how the average value of the function around a point differs from the value at that point.

📗 Laplacian of a graph is \(L = D - A\) that measures how the average value of the neighbors differs from the value at that node.

➩ \(A\) is the adjacency matrix (or edge weight matrix).

➩ \(D\) is the diagonal matrix with node degrees (or \(D_{ii} = \displaystyle\sum_{j} A_{ij}\)) on the diagonal.

➩ An alternative is to use normalized Laplacian \(L = I - D^{- \dfrac{1}{2}} A D^{- \dfrac{1}{2}}\): Link.

TopHat Quiz

ID:📗 [3 points] Compute the graph Laplacian of the following graph?

📗 Answer (matrix with multiple lines, each line is a comma separated vector):

# Spectral Clustering

📗 Compute Laplacian or normalized Laplacian \(L\).

📗 Sort eigenvalues in descending order and bottom K non-zero eigenvectors of \(L\): \(u_{1}, u_{2}, ..., u_{K}\).

📗 Set \(U\) to be \(n \times K\) matrix with columns \(\left[u_{1}, u_{2}, ..., u_{K}\right]\).

📗 Run k-means on the rows of \(U = \begin{bmatrix} x_{1} \\ x_{2} \\ ... \\ x_{n} \end{bmatrix}\).

➩ Spectral clustering is similar to PCA in that it reduces the dimensions of the original points (from \(m\) to \(K\)).

➩ Both use eigenvalues (PCA uses the largest K, Spectral Clustering uses the smallest k) but on different matrices (PCA uses covariance matrix, Spectral Clustering uses Laplacian): Link.

📗 Comparison with other clustering algorithms: Link.

Math Note

📗 Intuitively, minimizing cut is related to minimizing \(u^\top L u\) subject to \(\left\|u\right\| = 1\).

# T-distributed Stochastic Neighbor Embedding

📗 A common application of dimensionality reduction is for visualization into \(K = 2, 3\) dimensions.

📗 T-SNE (T-distributed Stochastic Neighbor Embedding) converts high dimensional vectors into \(K = 2, 3\) dimensonal vectors by:

➩ Turn vectors into probability pairs.

➩ Turn pairs back into low dimensional vectors.

# Probability of Neighbor

📗 The similarity between \(x_{i}\) and \(x_{j}\) is measured by the probability that \(x_{i}\) would pick \(x_{j}\) as its neighbor under a Gaussian probability.

➩ \(p_{ij} = \dfrac{1}{2 n} \left(p_{j | i} + p_{i | j}\right)\), where,

➩ \(p_{i | j} = \dfrac{\exp\left(- \dfrac{\left\|x_{i} - x_{j}\right\|^{2}}{2 \sigma_{j}^{2}}\right)}{\displaystyle\sum_{k \neq j} \exp\left(- \dfrac{\left\|x_{i} - x_{k}\right\|^{2}}{2 \sigma_{j}^{2}}\right)}\) and \(p_{j | i} = \dfrac{\exp\left(- \dfrac{\left\|x_{i} - x_{j}\right\|^{2}}{2 \sigma_{i}^{2}}\right)}{\displaystyle\sum_{k \neq i} \exp\left(- \dfrac{\left\|x_{i} - x_{k}\right\|^{2}}{2 \sigma_{i}^{2}}\right)}\).

📗 A parameter called perplexity is used to determine \(\sigma_{i}\).

➩ Small perplexity = small \(\sigma_{i}\) = local neighbors.

➩ Large perplexity = large \(\sigma_{i}\) = global neighbors.

➩ Perplexity measures the "effective" number of neighbors.

# Low Dimensional Space

📗 Place points randomly in \(K = 2, 3\) dimensional space.

📗 Use T distribution (1 degree of freedom) to measure similarity:

➩ \(q_{ij} = \dfrac{\left(1 + \left\|y_{i} - y_{j}\right\|^{2}\right)^{-1}}{\displaystyle\sum_{k \neq l} \left(1 + \left\|y_{i} - y_{j}\right\|^{2}\right)^{-1}}\).

# Kullback Leibler Divergence

📗 Gaussian distribution is used in the original space (light tail).

📗 T distribution is used in the low dimensional space (heavy tail, so that points are not crowded).

📗 The objective is to choose the new points \(y_{i}\) so that \(D = \displaystyle\sum_{ij} p_{ij} \log \left(\dfrac{p_{ij}}{q_{ij}}\right)\) is minimized.

➩ \(D\) is called the KL (Kullback Leibler) Divergence and measures the similarity between two distributions (similar to cross entropy).

📗 Gradient descent can be used for minimization.

test pc,pt,pj,es,pn,gc,nl,lap q

📗 Notes and code adapted from the course taught by Professors Blerina Gkotse, Jerry Zhu, Yudong Chen, Yingyu Liang, Charles Dyer.

📗 If there is an issue with TopHat during the lectures, please submit your answers on paper (include your Wisc ID and answers) or this Google form Link at the end of the lecture.

📗 Anonymous feedback can be submitted to: Form. Non-anonymous feedback and questions can be posted on Piazza: Link

Prev: W1, Next: W3

Last Updated: May 13, 2026 at 10:36 PM